AI voice analytics takes raw audio from calls, meetings, and conversations and transforms it into structured data your business can act on. Customer sentiment and behavioral trends, agent performance, compliance risks, and business intelligence are all analyzed at scale. This technology is a must-have if your organization views customer experience (CX) and operational excellence as key drivers of long-term growth.

The global call center market was valued last year at $352.4 billion[*], yet most organizations reportedly review just 1-2% of their customer interactions using traditional manual sampling.[*] AI voice analytics extracts insights from every interaction, so that all customer voices are heard.

- Overview

- AI Analytics vs Traditional

- How It Works

- Top Providers

- Benefits

- Use Cases

- Choosing a Solution

- Risks and Tradeoffs

- FAQs

What Is AI Voice Analytics?

AI voice analytics uses artificial intelligence (AI) to automatically analyze 100% of spoken conversations, extracting key information like customer sentiment, agent performance, conversation trends, and additional insights from unstructured data, at scale. While traditional speech analytics provides basic conversation transcription and keyword spotting, AI-powered systems leverage deep learning models to understand conversational context, emotional nuance, and intent. They transform hours of recorded audio into structured data, surfacing compliance risks, revenue opportunities, and quality issues.

AI voice analytics relies on several key components:

- Transcription engines: Advanced ASR (Automatic Speech Recognition) systems are much more accurate than standard transcription tools, capable of converting speech to text quickly even with multiple speakers, accents, and background noise

- Sentiment and emotion detection: Analyzes conversations across multiple dimensions, including positive/negative/neutral sentiment, specific emotions (frustration, satisfaction, urgency, confusion), and emotional intensity shifts throughout the call

- Natural language processing (NLP) models: Extracts customer intent, identifies compliance risks, detects competitive mentions, flags churn indicators, and clusters conversations by topic. Advanced systems perform entity recognition (product names, account numbers) and relationship extraction

- Acoustic analysis: Measures speech rate (words per minute), silence duration, interruption patterns, pitch variance, voice stress levels, and energy fluctuations – key indicators of confidence, agitation, or disengagement

All of these elements coalesce into a powerful tool that takes your unstructured audio data and makes it something searchable, analyzable, and relevant to business decisions.

AI Voice Analytics vs. Traditional Speech Analytics

The two terms get used interchangeably, but they describe different generations of the same technology. Traditional speech analytics focuses on keyword spotting and basic transcription. It tells you which words were said and how often. AI voice analytics adds context, emotion, and intent on top of that foundation, using machine learning models to interpret what those words actually mean in a given conversation.

Here's how they compare in practice:

- Transcription depth: Traditional speech analytics produces a text record. AI voice analytics produces a speaker-separated, timestamped transcript with metadata and searchable tags

- Sentiment: Traditional tools flag positive or negative words. AI voice analytics detects emotional states across the full conversation using both text and acoustic signals

- Intent recognition: Traditional tools match keywords. AI voice analytics infers what the caller actually wants, even when they phrase it in unexpected ways

- Coverage: Traditional tools sample a subset of calls. AI voice analytics analyzes 100% of interactions automatically

- Output: Traditional tools produce reports. AI voice analytics produces real-time alerts, agent guidance, and predictive insights

For most modern contact centers, AI voice analytics has replaced traditional speech analytics entirely. The older category still exists at the low end of the market, typically bundled with call recording systems, but buyers evaluating a new platform in 2026 should assume they're shopping in the AI voice analytics category.

How AI Voice Analytics Works

AI voice analytics turns raw audio into actionable insight in three stages. Each is powered by specialized AI models and processing techniques designed to extract as much as possible from every conversation.

Stage 1: Voice Capture & Transcription

Advanced voice analytics systems can handle the messy reality of real-world conversations. Audio is captured in real time (or from call recordings), noise suppression algorithms filter background interference, and volume fluctuations across different devices and channels are normalized.

Most importantly, AI voice analytics performs speaker diarization, the process of determining who said what, and when. Speaker diarization identifies individual speakers in multi-party conversations, handles overlapping speech and interruptions, and detects rapid speaker changes.

In contact centers, diarization makes it possible to measure agent talk time versus customer talk time and to attribute sentiment scores to the right speaker. For multi-channel recordings where each speaker occupies a separate audio track, diarization is straightforward. For single-channel recordings (the majority of contact center calls), it's the most technically challenging step in the pipeline.
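As a rough illustration of what diarized output enables, the sketch below computes each speaker's share of talk time. The `Segment` structure and the speaker labels are assumptions made for this example; real diarizers emit their own formats.

```python
from collections import defaultdict
from typing import NamedTuple


class Segment(NamedTuple):
    speaker: str  # label assigned by the diarizer (hypothetical: "agent", "customer")
    start: float  # seconds from the start of the call
    end: float


def talk_time_share(segments: list[Segment]) -> dict[str, float]:
    """Return each speaker's share of total speaking time (0.0 to 1.0)."""
    totals: dict[str, float] = defaultdict(float)
    for seg in segments:
        totals[seg.speaker] += max(0.0, seg.end - seg.start)
    spoken = sum(totals.values())
    if spoken == 0:
        return {}
    return {speaker: secs / spoken for speaker, secs in totals.items()}


segments = [
    Segment("agent", 0.0, 6.0),
    Segment("customer", 6.0, 18.0),
    Segment("agent", 18.0, 20.0),
]
shares = talk_time_share(segments)
print(shares)  # agent spoke 8 of 20 seconds, customer 12 of 20
```

Silence is excluded from the denominator here, so the shares always sum to 1. Dividing by total call length instead would also expose how much of the call was dead air.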

Automatic speech recognition (ASR) software now reaches accuracy of around 95% on clear audio.[*] Modern ASR systems use transformer-based neural networks (like OpenAI's Whisper or Google's Chirp) that handle accents, industry jargon, and conversational speech patterns. Transcription latency ranges from near-instantaneous to 30-60 seconds for batch processing of recorded calls.

Transcription completes within seconds: spoken words become timestamped text, and metadata preserves who said what and when.

Stage 2: AI Models & Signal Processing

Once transcribed, natural language processing (NLP) models analyze the text to identify topics, detect compliance violations, classify question types versus statements, and map conversation flow. Natural language understanding (NLU) extracts intent and meaning from context.

When a customer says "I need to cancel my subscription next month because I'm moving abroad," NLU identifies the intent (cancellation request), timing (next month), and reason (relocation), enabling automated downstream actions.
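To make the shape of that structured output concrete, here is a toy sketch that pulls the same three fields out of the example sentence. It uses hand-written regular expressions purely for illustration. The pattern names and the `ParsedIntent` type are hypothetical, and a production NLU model would learn these mappings from data rather than match keywords.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class ParsedIntent:
    intent: Optional[str]
    timing: Optional[str]
    reason: Optional[str]


# Hypothetical keyword rules standing in for a trained intent classifier.
INTENT_PATTERNS = {
    "cancellation_request": re.compile(r"\bcancel\b", re.I),
    "billing_question": re.compile(r"\b(invoice|charge|bill)\b", re.I),
}
TIMING_PATTERN = re.compile(r"\b(today|tomorrow|next (?:week|month|year))\b", re.I)
REASON_PATTERN = re.compile(r"\bbecause\s+(.+?)[.!?]?$", re.I)


def parse_utterance(text: str) -> ParsedIntent:
    """Extract intent, timing, and stated reason from one utterance."""
    intent = next(
        (name for name, pat in INTENT_PATTERNS.items() if pat.search(text)), None
    )
    timing = TIMING_PATTERN.search(text)
    reason = REASON_PATTERN.search(text)
    return ParsedIntent(
        intent=intent,
        timing=timing.group(1).lower() if timing else None,
        reason=reason.group(1).strip() if reason else None,
    )


result = parse_utterance(
    "I need to cancel my subscription next month because I'm moving abroad."
)
print(result)
```

A downstream workflow can then branch on `result.intent` without re-reading the transcript.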

Simultaneously, acoustic analysis processes the raw audio waveform to detect emotional indicators independent of spoken words:

- Prosodic features: Pitch variance, speaking rate (words per minute), voice energy, and rhythm patterns

- Acoustic fingerprinting: Converts unique voice signatures into comparable biometric patterns, enabling speaker verification, fraud detection, and cross-call voice matching

- Paralinguistic cues: Vocal tension, breathiness, tremor, pauses, interruptions, and speech disfluencies (um, uh, false starts)
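Two of the simpler signals listed above, speaking rate and pauses, can be derived from word-level timestamps alone. The sketch below assumes the ASR engine returns a start and end time per word; the `Word` structure and the one-second pause threshold are illustrative choices, not a standard.

```python
from typing import NamedTuple


class Word(NamedTuple):
    text: str
    start: float  # seconds
    end: float


def speaking_rate_wpm(words: list[Word]) -> float:
    """Words per minute over the span from first word start to last word end."""
    if not words:
        return 0.0
    duration = words[-1].end - words[0].start
    return len(words) / duration * 60 if duration > 0 else 0.0


def long_pauses(words: list[Word], min_gap: float = 1.0) -> list[float]:
    """Silence gaps between consecutive words lasting at least min_gap seconds."""
    gaps = (nxt.start - cur.end for cur, nxt in zip(words, words[1:]))
    return [round(g, 3) for g in gaps if g >= min_gap]


words = [
    Word("I", 0.0, 0.2),
    Word("need", 0.3, 0.6),
    Word("to", 0.7, 0.8),
    Word("cancel", 2.5, 3.0),
]
rate = speaking_rate_wpm(words)
pauses = long_pauses(words)
print(rate, pauses)
```

Pitch, energy, and vocal tension require the raw waveform and a signal-processing library, so they are out of scope for this sketch.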

Additional NLP capabilities include:

- Anomaly detection: Flags unusual language patterns, potential fraud indicators, or security risks

- Script adherence monitoring: Measures how closely agents follow required disclosures, greeting scripts, or compliance language

- Entity extraction: Identifies and redacts personally identifiable information (PII) like credit card numbers, social security numbers, account IDs, and addresses
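The redaction step in entity extraction can be sketched with pattern matching plus a checksum. The example below masks US-style Social Security numbers and card numbers that pass the Luhn check. The regular expressions and placeholder tokens are assumptions for illustration; commercial platforms typically combine rules like these with trained entity recognizers.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum used by payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact(text: str) -> str:
    """Replace SSNs and Luhn-valid card numbers with placeholder tokens."""

    def mask_card(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return "[CARD]" if luhn_valid(digits) else match.group()

    text = SSN.sub("[SSN]", text)
    return CARD_CANDIDATE.sub(mask_card, text)


redacted = redact("My card is 4111 1111 1111 1111 and my SSN is 123-45-6789.")
print(redacted)
```

The Luhn check keeps long order numbers or tracking IDs from being masked as cards, which preserves more of the transcript for analysis.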

Advanced sentiment models classify up to 15-20 distinct emotional states (frustration, confusion, urgency, satisfaction, anger, anxiety, excitement), moving far beyond basic positive/negative binary classifications.

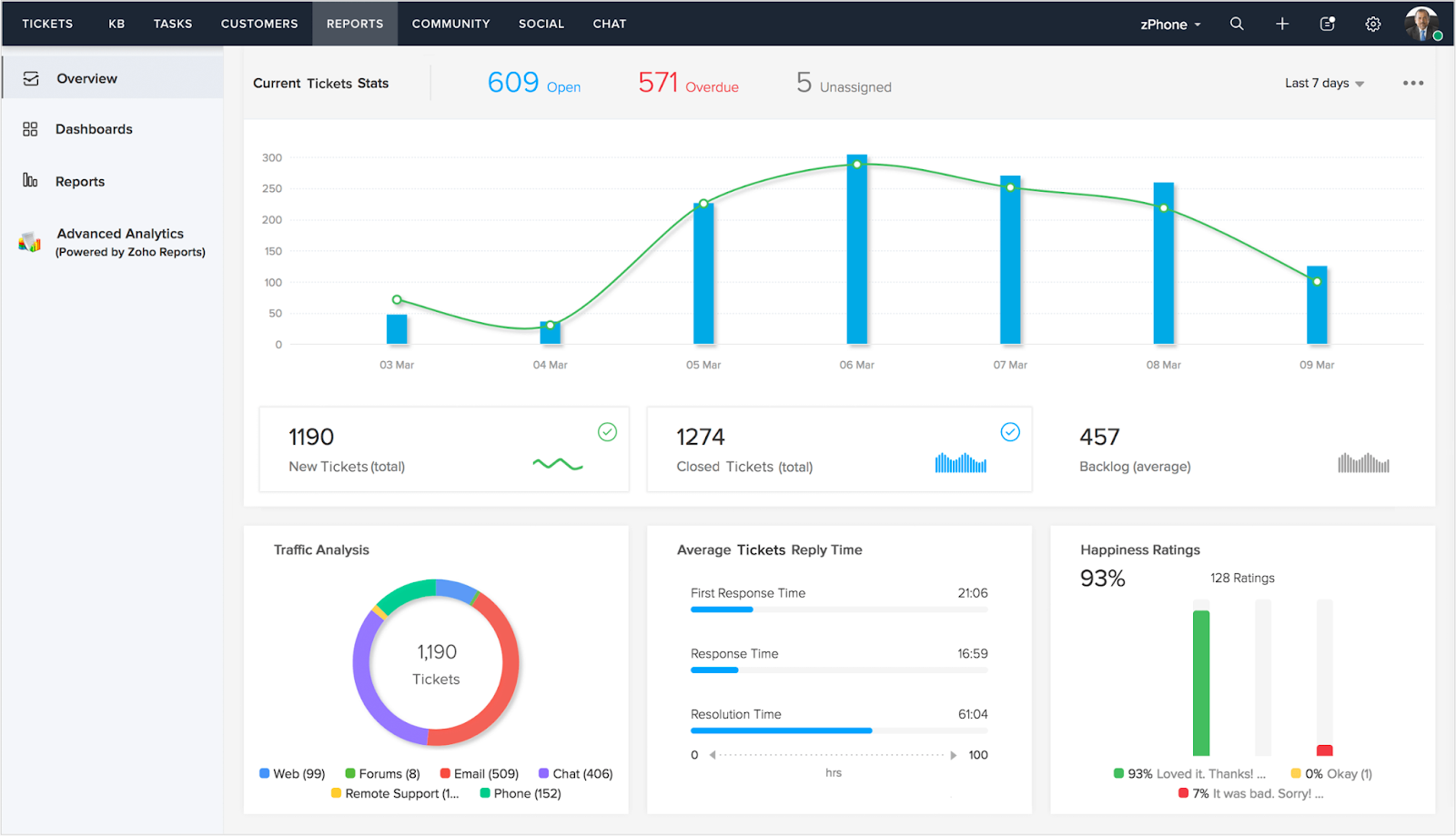

Stage 3: Data Visualization & Integration

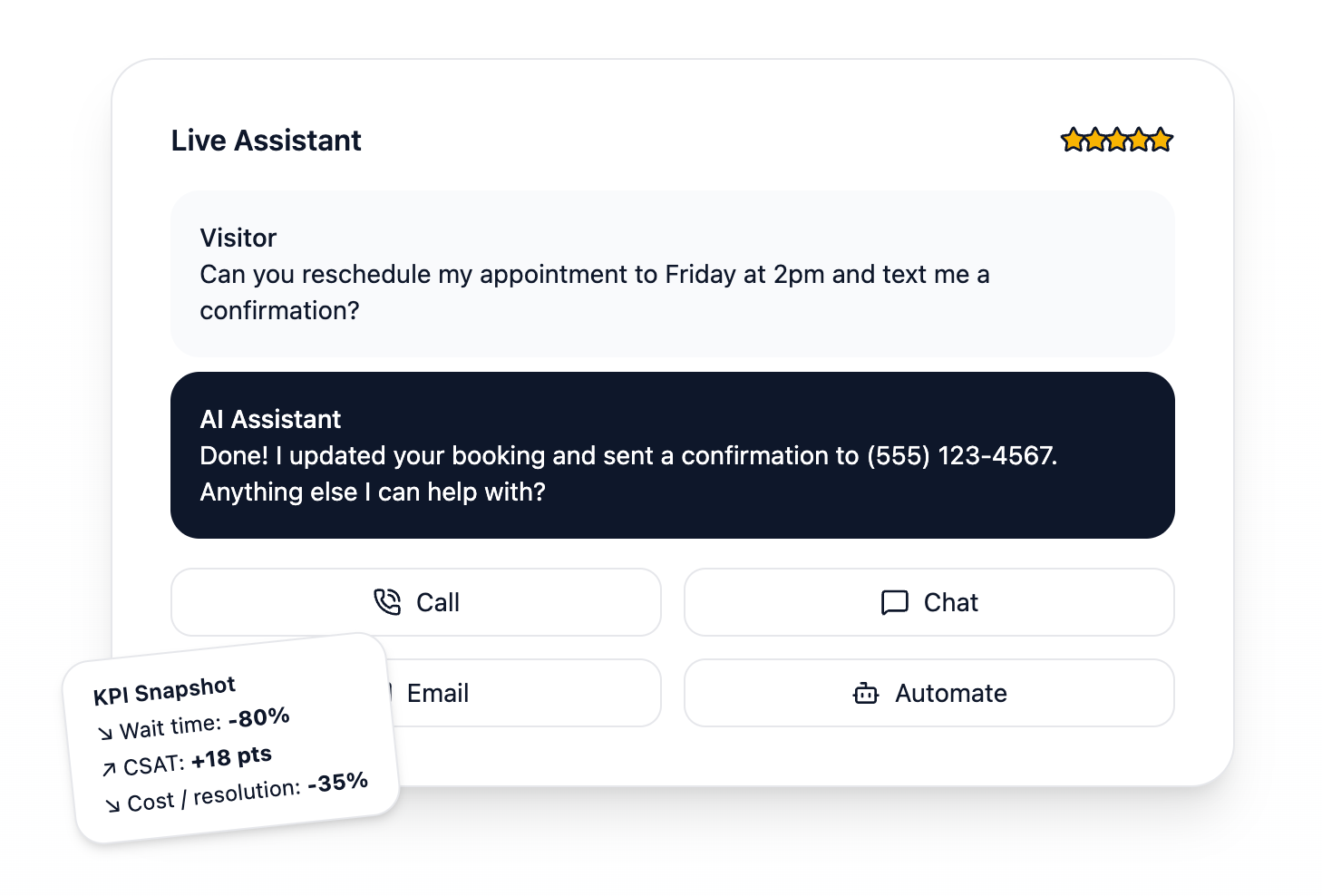

Analyzed data flows into customizable dashboards that surface patterns through sentiment timelines, topic clusters, keyword frequency charts, agent performance scorecards, and trend graphs. The system uses REST APIs, webhooks, or native connectors to integrate directly with existing business infrastructure like CRM platforms (Salesforce, HubSpot, Zendesk), contact center software (Five9, Genesys, NICE), workforce management tools, and business intelligence suites.

Real-time alerting notifies supervisors when conversations trigger predefined thresholds: sustained negative sentiment, compliance violations, competitive mentions, cancellation intent, or VIP customer escalations.
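A "sustained negative sentiment" threshold can be sketched as a sliding window over per-utterance scores. The score range of -1 to 1, the -0.4 threshold, and the three-utterance window below are all assumptions chosen for the example; each platform defines its own scale and defaults.

```python
from collections import deque


class SustainedNegativeAlert:
    """Fires once when the last `window` sentiment scores are all below `threshold`."""

    def __init__(self, threshold: float = -0.4, window: int = 3) -> None:
        self.threshold = threshold
        self.recent = deque(maxlen=window)  # keeps only the newest `window` scores
        self.fired = False

    def update(self, score: float) -> bool:
        """Feed one per-utterance score; return True at the moment the alert triggers."""
        self.recent.append(score)
        sustained = len(self.recent) == self.recent.maxlen and all(
            s < self.threshold for s in self.recent
        )
        if sustained and not self.fired:
            self.fired = True
            return True
        return False


alert = SustainedNegativeAlert()
scores = [0.2, -0.5, -0.1, -0.6, -0.7, -0.8, -0.9]
fired_at = [i for i, s in enumerate(scores) if alert.update(s)]
print(fired_at)
```

Requiring several consecutive low scores, rather than a single one, avoids paging a supervisor over one sharp remark in an otherwise calm call.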

Automation triggers launch downstream workflows based on detected signals—updating CRM records, assigning follow-up tasks, routing calls to specialized teams, scheduling callbacks, or flagging accounts for retention campaigns.

Top AI Voice Analytics Solutions Compared

Below is a quick side-by-side of the platforms most commonly evaluated for AI voice analytics in the contact center and CX space.

| Platform | Best For | Key Strength | Pricing Model |
| --- | --- | --- | --- |
| Observe.AI | Enterprise contact centers | Real-time agent assist and Auto QA on 100% of interactions | Subscription, quote-based |
| Calabrio ONE | Workforce engagement and WFO | Conversation intelligence bundled with WFM and quality management | Subscription, quote-based |
| NICE | Large enterprise contact centers | Mature speech analytics with deep compliance tooling | Enterprise licensing |
| RingCentral (ACE) | Unified comms + conversation intelligence | AI Conversation Expert bundled into RingEX and RingCX | Add-on, per user/month |
| Dialpad | AI-first business phone system | Native real-time transcription and sentiment built into calls | Per user/month |
| Nextiva | All-in-one CX platform | Voice analytics across voice, chat, SMS, email, and social | Per user/month, AI add-ons |
| Zoom Phone | Zoom Workplace customers | AI Companion call summaries and voicemail prioritization | Included in paid Zoom plans |

Observe.AI

Observe.AI is a contact center AI platform that combines conversation intelligence, Auto QA, and real-time agent assist. It analyzes 100% of voice and chat interactions, supports 25+ languages through its VoiceAI agents, and integrates with 250+ third-party tools like CCaaS platforms and CRMs. Large contact center deployments at brands like Accolade and Prudential use it for compliance monitoring, coaching, and automated quality evaluation.

Key features:

- VoiceAI Agents for automating customer voice conversations end-to-end

- Agent Copilot for live guidance and response suggestions during calls

- Auto QA that scores 100% of interactions against custom scorecards

- Conversation Intelligence with sentiment, topic, and moment detection powered by multi-LLM orchestration

- Coaching Copilot with personalized agent development plans

- Omnichannel coverage across voice, chat, and email interactions

Pricing: Subscription-based, quote-only. Pricing scales with seat count, features selected, and interaction volume. No public pricing page.

Best for: Large enterprise contact centers in healthcare, financial services, insurance, and BPO that need automated QA across high call volumes, with compliance and coaching as primary drivers.

Calabrio ONE

Calabrio takes a workforce-engagement approach, packaging speech analytics, sentiment analysis, Auto QM, and workforce management in one suite. Its conversation intelligence layer uses GenAI for interaction summaries, trending topic detection, and advanced sentiment that separates agent and customer emotions. Best fit for teams that want analytics alongside scheduling, forecasting, and performance management rather than as a standalone tool.

Key features:

- Auto QM with intelligent phrase-match scoring and certified question library

- Advanced Sentiment that separates customer and agent emotions

- Trending Topics detection that surfaces emerging themes hourly

- Interaction Summary using GenAI for post-call wrap-up

- Real-Time Desktop Analytics for agent application usage

- Full WFM and quality management suite integrated with analytics

Pricing: Subscription-based, quote-only. Pricing is typically structured by seat count and feature bundle within the Calabrio ONE suite.

Best for: Contact centers that want conversation intelligence bundled with workforce management, forecasting, and scheduling in one suite, rather than running a separate analytics tool alongside a WFM platform.

NICE

NICE is the legacy enterprise player in the category. Its speech analytics tooling covers interaction recording, compliance monitoring, real-time authentication, and fraud detection, with particularly deep coverage for financial services and regulated industries. Pricing and implementation complexity scale with enterprise scope, which makes it a better fit for organizations with the resources to run a full deployment than for mid-market buyers.

Key features:

- Enlighten AI models trained on contact center interactions

- Compliance recording with multi-year retention for FINRA, MiFID II, and similar mandates

- Real-time authentication and voice biometrics for fraud detection

- Interaction analytics across voice, chat, and email

- Deep CCaaS integration via CXone

- Quality management and workforce optimization suite

Pricing: Enterprise licensing, quote-only. Contract size and implementation scope shape the final cost.

Best for: Large enterprises in banking, insurance, and telecom that need mature speech analytics alongside compliance recording, fraud detection, and workforce optimization at global scale.

RingCentral

RingCentral bundles voice analytics into its broader unified communications platform through AI Conversation Expert (ACE), the product formerly marketed as RingSense. ACE sits across RingEX (business phone) and RingCX (contact center) to transcribe, analyze, and extract insights from every call. The rebrand expanded ACE from a sales-focused tool into a horizontal conversation intelligence layer that serves sales, support, and operations teams.

Key features:

- AI Conversation Expert (ACE) for post-call insights, summaries, and scoring

- Real-time transcription across voice, video, and messaging

- Sentiment analysis and keyword/topic tracking

- Action item extraction and coaching recommendations

- CRM integrations with Salesforce and HubSpot for call logging

- Insights module with real-time visibility into customer sentiment and team performance

Pricing: RingEX plans run $20 to $35 per user per month (annual billing). ACE is offered as an add-on starting around $60 per user per month on top of the base RingEX subscription.

Best for: Teams already using RingCentral for their business phone or contact center who want to layer conversation intelligence onto existing call traffic without adopting a separate analytics platform.

Dialpad

Dialpad was built as an AI-first cloud phone system, and voice analytics is a native feature rather than an add-on. Dialpad Ai (also marketed as Voice Intelligence, or Vi) transcribes every call in real time, analyzes sentiment, and surfaces Real-Time Assist cards with suggested responses during live conversations. The company trained its own LLM (DialpadGPT) on billions of minutes of business conversation data, which gives the AI a language model tuned specifically to sales and support calls.

Key features:

- Real-time transcription during calls, searchable and archived automatically

- Ai Recaps with AI-generated call summaries, key points, and action items

- Real-Time Assist cards for live coaching and suggested responses

- Sentiment analysis flagging unhappy customers mid-call

- Custom company dictionary for industry-specific terminology

- Ai CSAT for automated customer satisfaction scoring

- Ai Coaching Hub for sales and support team development

Pricing: Dialpad Connect starts at $15 per user per month, with Voice Intelligence included. Dialpad Ai Contact Center starts at $80 per user per month. Higher tiers add agent coaching, business intelligence, and advanced analytics.

Best for: Sales and support teams that want real-time transcription, sentiment, and coaching built directly into every call, without paying extra for conversation intelligence as a separate product.

Nextiva

Nextiva's voice analytics sit inside its NEXT Platform, a unified customer experience product that covers voice, SMS, chat, email, social, and video in one dashboard. The recent platform overhaul puts AI at the center, with Agent Assist for real-time guidance, XBert as an AI employee for customer conversations, and a Voice Analytics add-on that unlocks wallboards, gamification, and full historical data. Sentiment analysis, topic insights, and CSAT tracking run across every channel natively.

Key features:

- Cross-channel analytics covering voice, SMS, chat, email, social, and video

- Sentiment analysis, topic insights, and CSAT/NPS tracking

- Live transcription and AI-generated call recaps with action items

- Agent Assist for real-time guidance during customer conversations

- XBert AI employee for automating inbound interactions

- Voice Analytics add-on for wallboards, gamification, and full historical data

- 250+ report templates with scheduled email delivery

Pricing: NEXT Platform plans start at $15 per user per month on the Core tier (annual billing, new-customer pricing). Engage plans run $25 to $50 per user per month. XBert AI and advanced AI capabilities start at $99 per month as an add-on.

Best for: Small and mid-size businesses that want conversation analytics alongside omnichannel customer engagement, without buying a standalone analytics product on top of their existing phone system.

Zoom Phone

Zoom Phone's voice analytics are delivered through Zoom AI Companion, which is included at no additional cost for paid Zoom Workplace customers. AI Companion joins Zoom Phone calls to transcribe in real time, generate post-call summaries, prioritize voicemails by intent, and answer mid-call questions through an in-app panel. It's not a dedicated contact center analytics platform, but for Zoom customers the bundled access removes a decision point.

Key features:

- Call Summary with AI Companion for post-call summaries and action items

- Live transcription during Zoom Phone calls

- Voicemail Prioritization based on user-defined intents

- Voicemail Task Suggestions that turn messages into trackable tasks

- Real-time Q&A on the active call transcript

- Auto language detection and translation for transcripts, SMS, and voicemails

- Smart recording with AI-generated highlights and chapters

Pricing: AI Companion is included at no extra cost for paid Zoom Workplace plans (Pro, Business, Enterprise). Zoom Phone plans start at $10 per user per month (US & Canada Metered) and $15 per user per month (US & Canada Unlimited), with Workplace+Phone bundles from $18.33 per user per month.

Best for: Organizations already standardized on Zoom Workplace and Zoom Phone who want conversation intelligence bundled into their existing subscription rather than procuring a separate analytics vendor.

Benefits of AI Voice Analytics

Voice analytics gives your organization accurate reporting and measurable improvements across CX, operational health, and risk management. It addresses the biggest obstacles to understanding voice interactions at scale. Here are some immediate benefits of adopting AI voice analytics:

Customer Sentiment Understanding

AI voice analytics helps you uncover how customers actually feel about you by analyzing emotion, intent, and perceived satisfaction across every stage of a conversation. The biggest advantage is catching frustration before a customer outright complains and spotting satisfaction even when post-call surveys go unfinished.

Signals like tone shifts, speech pace, interruptions, and specific phrasing all feed into a real-time read on sentiment. Companies that use sentiment analysis report 15-20% improvements in customer satisfaction scores (CSAT) just by acting on emotional cues that would otherwise go unnoticed.[*]

Agent Performance Improvement

Real-time coaching and automatic quality assurance (QA) change how organizations develop their teams. Supervisors get immediate notifications when agents need help, AI identifies top performers, and the system parses the specific behaviors that make them successful so those patterns can be taught to the rest of the team. Every call becomes a coaching opportunity instead of a random sample.

Real-time coaching in modern platforms takes a few specific forms:

- Live prompts pop up in the agent's interface when a customer mentions a competitor, asks about pricing, or shows signs of churn risk.

- Suggested responses surface relevant knowledge base articles based on the topic of the conversation as it unfolds.

- Compliance alerts flag when a required disclosure hasn't been read, or when an agent uses restricted language.

- Supervisor escalation triggers automatically alert when sentiment drops past a threshold, allowing a manager to listen in or join the call before the customer disengages.

The system automatically scores interactions against your own quality criteria, freeing QA teams to focus on more complex cases. Organizations report reductions of up to 40% in average handling time after adopting AI-powered coaching programs.[*]

Operational Excellence and Efficiency

Analyzing every conversation automatically and systematically eliminates the very real bottleneck of manual call review. Teams that could previously review only 50 or 100 calls per month now get information from thousands of interactions across all channels. This broader coverage reveals patterns that would not show up in smaller samples, surfaces systemic issues quickly, and supports more data-driven resource allocation.

Voice-of-the-Customer Insights

Aggregated conversation data highlights the following key factors from your interactions:

- Customer pain points

- Emerging product issues

- Service quality feedback

- Relevant market trends

- Competitor mentions and positioning

Product teams see which features customers actually care about, marketing learns which messages resonate, and executives hear authentic brand perception across thousands of real conversations. Don't let survey assumptions cloud real-world evidence when planning. Voice data reflects what customers say unprompted, which is almost always more honest than what they check on a form.

Compliance and Risk Detection

Automated monitoring flags potential regulatory violations, risky statements from agents, and inappropriate language as the words are spoken. AI voice analytics verifies that required disclosures are read, catches prohibited topics, and proactively identifies conversations that need legal review — protecting your business from liability. Financial services firms use voice analytics to monitor 100% of recorded calls for compliance, versus the 1–2% that's realistic with manual review, dramatically cutting regulatory risk while maintaining audit trails that can surface bad-faith actors in seconds.

Compliance recording is the practice of capturing and retaining calls to meet regulatory requirements, and it has industry-specific rules that voice analytics platforms need to support:

- GDPR (EU) requires a lawful basis for recording calls with EU residents (typically explicit, informed consent) along with the right to erasure, clear retention policies, and data processing agreements with any vendor handling the recordings.

- PCI-DSS requires pause-and-resume recording or real-time redaction during payment card data capture, so that full card numbers and CVV codes never enter the recording or transcript. Storing CVV data post-authorization is prohibited outright.

- HIPAA requires encrypted storage (at rest and in transit), strict access controls, tamper-evident audit trails, and a signed Business Associate Agreement (BAA) with any vendor handling calls containing protected health information.

- FINRA Rule 3110 and SEC Rule 17a-4 require broker-dealers to retain electronic communications for at least three years, with the first two in an easily accessible format. MiFID II mandates five-year retention for investment-related calls in the EU, extendable to seven years at regulator request.

- Two-party consent laws in states like California, Florida, Illinois, and Pennsylvania (along with the federal TCPA) require clear notification or explicit consent before recording begins, which matters for any US business with a multi-state customer base.
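Configurable retention can be pictured as a lookup from regulatory regime to a minimum number of years, with the longest applicable period winning. The sketch below uses only the retention periods quoted in the list above; the regime keys and function name are hypothetical, and a real policy would come from your compliance team rather than a hard-coded table.

```python
from datetime import date

# Minimum retention in years, taken from the figures cited in this section.
RETENTION_YEARS = {
    "sec_17a4": 3,           # broker-dealer electronic communications
    "mifid_ii": 5,           # EU investment-related calls
    "mifid_ii_extended": 7,  # when a regulator requests the extension
}


def earliest_deletion_date(recorded_on: date, regimes: list[str]) -> date:
    """Return the first date a recording may be deleted under all given regimes."""
    years = max(RETENTION_YEARS[r] for r in regimes)
    try:
        return recorded_on.replace(year=recorded_on.year + years)
    except ValueError:
        # Recorded on Feb 29 and the target year is not a leap year.
        return recorded_on.replace(year=recorded_on.year + years, day=28)


deletion = earliest_deletion_date(date(2026, 3, 15), ["sec_17a4", "mifid_ii"])
print(deletion)
```

Taking the maximum across regimes means a call that falls under both US and EU rules is kept for the longer of the two periods.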

Voice analytics platforms built for regulated industries typically ship with consent management, automated redaction of sensitive data (PII, PHI, PCI), role-based access controls, tamper-evident audit logs, and configurable retention policies out of the box.

For global deployments, verify that the platform supports data residency controls so EU, UK, US, and APAC recordings stay in their required regions. Confirm it holds the certifications your industry expects: SOC 2 Type II, ISO 27001, HITRUST (healthcare), and PCI-DSS Level 1 (payments).

Top Use Cases Across Industries

AI voice analytics has found a place across many industries, each with its own demands and its own ways of applying the technology to industry-specific problems. The examples below show how voice analytics changes operations and outcomes in each sector while complying with regulatory requirements and best practices.

Contact Centers and CX

Contact centers have the most mature application of voice analytics. Leading operators leverage the technology for quality assurance, agent coaching, and emotion detection across millions of annual interactions. Systems provide real-time guidance mid-call and surface relevant knowledge base articles the moment a customer shows confusion.

Two capabilities separate mature deployments from basic ones:

- Automated quality assurance (Auto QA): Scores every interaction against custom scorecards instead of sampling 1-2% manually. Every agent is evaluated on every call, and coaching decisions rest on comprehensive data rather than random samples.

- Searchable transcript libraries: Let QA analysts and supervisors filter across millions of conversations by keyword, sentiment, topic, outcome, or agent to surface specific examples in seconds, turning historical call archives into a queryable knowledge base.
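At its simplest, scoring a call against a custom scorecard means checking weighted criteria against the agent's side of the transcript. The sketch below uses plain phrase matching to show the mechanics. The `Criterion` type, the phrases, and the weights are invented for the example, and the platforms described here generally rely on AI models rather than literal string matches.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str
    required_phrases: list[str]  # any one of these satisfies the criterion
    weight: int


def score_call(agent_text: str, scorecard: list[Criterion]) -> tuple[float, list[str]]:
    """Return (percentage score, names of missed criteria) for one call."""
    text = agent_text.lower()
    earned = 0
    missed: list[str] = []
    for criterion in scorecard:
        if any(phrase.lower() in text for phrase in criterion.required_phrases):
            earned += criterion.weight
        else:
            missed.append(criterion.name)
    total = sum(c.weight for c in scorecard)
    return (100 * earned / total if total else 0.0), missed


scorecard = [
    Criterion("greeting", ["thank you for calling"], 20),
    Criterion(
        "recording_disclosure",
        ["this call may be recorded", "this call is being recorded"],
        50,
    ),
    Criterion("closing", ["anything else"], 30),
]
score, missed = score_call(
    "Thank you for calling Acme. This call may be recorded for quality purposes. "
    "How can I help?",
    scorecard,
)
print(score, missed)
```

Returning the missed criteria alongside the score gives supervisors a specific coaching point instead of a bare number.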

When a call goes sideways, the system alerts supervisors and recommends next-best actions based on similar past interactions that ended successfully. According to SQM Group's FCR benchmarking research, only 5% of contact centers reach the world-class first-call resolution benchmark of 80% or higher, and AI-driven coaching is one of the highest-leverage tools for closing the gap.

Healthcare

Healthcare applications focus on cognitive decline detection through conversational voice analysis. AI monitors tone, vocabulary complexity, speech patterns, and conversational coherence over time to detect decline or stability. Clinicians use this technology to catch early signs of dementia, Alzheimer's, and other cognitive conditions, enabling earlier intervention when it matters most.

A 2025 NIH study published in Alzheimer's & Dementia found that machine learning models trained on speech biomarkers can detect mild cognitive impairment with an AUC of 0.967, offering a non-invasive monitoring option that complements traditional assessments and tracks patient progress between clinical visits.

Other healthcare voice analytics use cases include:

- Clinical documentation: Ambient scribing during patient encounters reduces physician administrative burden and frees up face-time with patients

- Patient satisfaction monitoring: Sentiment analysis across call center interactions identifies dissatisfaction trends earlier than survey data

- Telehealth risk flagging: AI detects vocal stress, confusion markers, or speech anomalies during virtual visits that may indicate clinical concerns

Financial Services

Banks and investment firms use voice analytics for fraud detection, combining voice biometrics with behavioral pattern analysis to verify that customers are who they claim to be. Compliance monitoring ensures regulated conversations meet legal requirements by:

- Verifying required disclosures are read accurately and in full

- Detecting potential insider trading discussions

- Flagging manipulative or high-pressure sales language

- Identifying account takeover attempts and coercion scenarios (for example, an elderly customer being instructed by a scammer in the background)

Industry research reports that financial institutions combining voice biometrics with behavioral analytics see less fraud than those relying on traditional knowledge-based authentication. HSBC's voice biometric program, one of the largest deployments globally, has enrolled over 15 million customers and analyzes 100+ unique voice characteristics per authentication.

Sales and Revenue Teams

Sales teams using AI voice analytics see meaningful win rate gains by replicating the behaviors that win deals and closing the gaps revealed through comprehensive conversation analysis. Gong research found that sellers who use AI to guide their deals increase win rates by 35%, and a Gong Labs study of 7.1 million sales opportunities showed organizations embedding AI as a core part of their go-to-market strategy are 65% more likely to increase win rates than competitors treating it as optional.

Conversation intelligence platforms parse sales calls to identify what separates won deals from lost ones, automatically detecting:

- Buying signals and intent indicators

- Objections and how top reps handle them

- Competitor mentions across the deal cycle

- Pricing and discount discussions

- Effective closing techniques and patterns

Sales leaders can see which talk tracks actually convert, how top performers turn objections into wins, and when prospects show genuine interest versus polite engagement. The same data flows into onboarding: new reps learn from the best calls in the library instead of waiting weeks to shadow senior reps live.

Choosing an AI Voice Analytics Solution

Finding the right AI voice analytics platform means weighing the factors that affect both day-one usability and long-term viability.

Accuracy and Model Quality

Transcription accuracy directly impacts every insight downstream, so treat it as the foundational element of any voice analytics system. Evaluate solutions on how well they handle industry-specific terminology, regional accents, and variable audio conditions. Real-time analysis matters for use cases that require immediate action; post-call analysis is often sufficient for retrospective QA.

The most reliable ASR systems achieve over 95% accuracy on clear audio but can drop to 75-80% on heavy accents, noisy environments, or overlapping speakers. Always test with your own audio samples before committing, as vendor demos are rarely representative of real-world call quality.
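One way to run that test yourself is to compare vendor transcripts against human-verified references using word error rate (WER), the standard ASR metric. The sketch below assumes "accuracy" is read as 1 minus WER, which is a common convention but not one the vendors quoted here necessarily share; the sample sentences are invented.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in the reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")

    # Word-level edit distance, computed one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(
                min(
                    prev[j] + 1,         # deletion
                    curr[j - 1] + 1,     # insertion
                    prev[j - 1] + cost,  # match or substitution
                )
            )
        prev = curr
    return prev[-1] / len(ref)


wer = word_error_rate(
    "I need to cancel my subscription next month",
    "I need to cancel my prescription next month",
)
print(f"WER: {wer:.3f}, accuracy: {1 - wer:.1%}")
```

A single misheard word in a short sentence already costs 12.5 points of accuracy, so score a large and varied sample of your own calls before drawing conclusions.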

Integration Capabilities

Your chosen platform must connect reliably to your existing tech stack: CRMs, contact center platforms, data warehouses, and BI tools. Native integrations ensure data flows automatically without custom development work, while open APIs enable specialized workflows. Consider whether the solution pushes insights into tools your team already uses daily, or whether it forces them to adopt yet another dashboard.

Key integration points to verify: CCaaS platforms (Genesys, NICE CXone, Five9, Talkdesk), CRMs (Salesforce, HubSpot, Dynamics), collaboration tools (Slack, Teams), and data warehouses (Snowflake, BigQuery, Redshift) for long-term analytics.

Security and Compliance Considerations

Voice data often includes sensitive information, which demands robust security controls. Verify that providers offer:

- Encryption at rest and in transit

- Detailed data retention policies covering how long data stays on third-party servers and where

- Compliance certifications appropriate to your industry (SOC 2 Type II, ISO 27001, HIPAA, PCI-DSS, GDPR readiness)

- Role-based access controls and audit logging

Security and compliance are often the deciding factors for executive buyers in regulated industries, so confirm certifications upfront before investing time in deeper evaluation.

Scalability and Performance

Check whether the system handles your current call volumes and can grow without degradation. Cloud-based solutions typically offer elastic scaling, while on-premise deployments may require capacity planning to maintain quality at scale. Processing speed matters because real-time guidance depends on sub-second analysis; overnight batch processing is fine for retrospective QA but won't help an agent mid-call.

Ask vendors for concrete benchmarks: concurrent call capacity, transcription latency, and how the system behaves during peak loads.
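If a vendor shares raw latency measurements, or you collect your own during a pilot, percentiles are more informative than averages because a few slow responses can ruin real-time guidance. The sketch below is illustrative only: the sample values are invented, the 1,000 ms budget is an assumption standing in for "sub-second", and the helper names are hypothetical.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample that has at least
    pct percent of all samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def summarize_latency(samples_ms: list[float], budget_ms: float = 1000.0) -> dict:
    """Report median and tail latency, and whether the tail fits the budget."""
    p50 = percentile(samples_ms, 50)
    p95 = percentile(samples_ms, 95)
    return {"p50_ms": p50, "p95_ms": p95, "within_budget": p95 <= budget_ms}


# Invented measurements (milliseconds) from a hypothetical peak-hour test.
peak_samples = [220, 310, 280, 450, 1900, 260, 300, 240, 330, 290]
print(summarize_latency(peak_samples))
```

In this made-up sample the median looks healthy, but the tail does not fit a sub-second budget, which is the kind of peak-load behavior worth asking vendors about.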

Ease of Use

The best analytics in the world aren't worth much if nobody uses them. Evaluate dashboard intuitiveness, customization depth, and whether non-technical users can find insights without needing engineering support. Implementation complexity matters too, as solutions requiring months of professional services delay time-to-value compared to platforms offering rapid deployment.

Pricing Models

AI voice analytics platforms use several different pricing structures, and the right choice depends on your call volume and team size:

- Per-seat licensing is standard for contact center suites like Observe.AI and Calabrio, typically quoted against your agent headcount.

- Per-minute pricing suits lower volumes or API-first deployments like Deepgram or AssemblyAI, where you pay only for audio actually processed.

- Enterprise licensing is common at the NICE and Verint tier, with annual contracts and bundled support.

- Bundled flat-rate pricing is what you'll see from cloud phone systems like Aircall or Dialpad, where lighter voice analytics features come included with the per-user phone subscription rather than priced separately.

When budgeting, factor in implementation services, connector fees for CRM and CCaaS integrations, storage costs for recording retention, and any per-minute charges for real-time transcription. Each can meaningfully change total cost of ownership.
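To see how call volume shifts the comparison between per-seat and per-minute pricing, a rough break-even calculation helps. The sketch below uses entirely hypothetical prices ($100 per seat per month and $0.02 per audio minute); they are placeholders for illustration, not quotes from any vendor named above, and the function names are likewise made up.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    agents: int
    minutes_per_agent_per_month: float


def per_seat_cost(s: Scenario, price_per_seat: float) -> float:
    """Monthly cost when billed by agent headcount."""
    return s.agents * price_per_seat


def per_minute_cost(s: Scenario, price_per_minute: float) -> float:
    """Monthly cost when billed by audio minutes actually processed."""
    return s.agents * s.minutes_per_agent_per_month * price_per_minute


def breakeven_minutes(price_per_seat: float, price_per_minute: float) -> float:
    """Minutes per agent per month at which both models cost the same."""
    return price_per_seat / price_per_minute


# Hypothetical prices; replace with figures from real vendor quotes.
SEAT_PRICE = 100.0    # dollars per agent per month (assumed)
MINUTE_PRICE = 0.02   # dollars per audio minute (assumed)

team = Scenario(agents=50, minutes_per_agent_per_month=3000)
print(f"Per-seat:   ${per_seat_cost(team, SEAT_PRICE):,.0f}/month")
print(f"Per-minute: ${per_minute_cost(team, MINUTE_PRICE):,.0f}/month")
print(f"Break-even: {breakeven_minutes(SEAT_PRICE, MINUTE_PRICE):,.0f} min/agent/month")
```

Under these assumed prices, per-minute billing is cheaper until each agent handles roughly 5,000 minutes a month. Implementation services, connector fees, and storage would be added on top of whichever model you choose.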

Risks and Tradeoffs

AI voice analytics can deliver savings and insights quickly, but organizations should weigh several risks before deploying it, particularly around privacy and ethics. The main ones are:

- Privacy and compliance: Handling sensitive audio data means complying with GDPR, HIPAA, and other applicable regulations. It also means careful attention to consent, data retention policies, and restrictions on cross-border data transfers. Organizations need to weigh analytical value against privacy obligations, implement appropriate controls, and tell customers transparently what information is collected about them

- Accuracy and language coverage: Accents, dialects, and multilingual environments can challenge even robust ASR technology. Models trained only on standard dialects may struggle with regional variations, and mislabeled or underrepresented data often biases insights and coaching recommendations

- Infrastructure and scale: Processing thousands of voice interactions in real time is computationally demanding. Organizations must decide whether cloud or on-premise deployment makes more sense financially and operationally, and manage costs as call volumes grow. Reliability is critical when real-time guidance depends on consistent performance

- Model bias and ethics: Ensuring fairness and avoiding discriminatory outcomes means actively looking for sources of bias in your existing systems. Emotion detection models are not always well calibrated across genders, ages, or cultural backgrounds. Left unchecked, these flaws can lead to unfair agent evaluations or customer treatment. Offsetting them requires regular bias testing and diverse training data

- Change management: Integrating analytics insights into workflows effectively requires more than technology deployment. Agents may resist AI monitoring, managers need training to interpret insights productively, and organizations must develop processes that turn data into action rather than generating reports nobody uses
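The regular bias testing mentioned under model bias and ethics can start small: take a sample of calls where a human has labeled the sentiment, tag each call with a speaker group, and compare how often the model agrees with the human label in each group. The sketch below is a minimal illustration. The group names, labels, and records are invented, the helper names are hypothetical, and a real audit would need far larger samples and a threshold chosen by your own compliance team.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, predicted_label, human_label) tuples.
    Returns the share of predictions matching the human label per group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def max_accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Invented sample: (speaker group, model sentiment, human-labeled sentiment).
sample = [
    ("group_a", "negative", "negative"),
    ("group_a", "neutral", "neutral"),
    ("group_a", "positive", "positive"),
    ("group_a", "negative", "neutral"),
    ("group_b", "negative", "neutral"),
    ("group_b", "negative", "positive"),
    ("group_b", "neutral", "neutral"),
    ("group_b", "positive", "positive"),
]

scores = accuracy_by_group(sample)
print(scores)
print(f"Largest gap between groups: {max_accuracy_gap(scores):.2f}")
```

A persistent gap between groups is a signal to review training data coverage before those scores feed into agent evaluations.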

Success depends on taking these challenges seriously through careful, transparent implementation planning, ongoing monitoring, and a team committed to following the processes you put in place. Doing so lets you use insights responsibly and strengthen customer trust.