Whether you love it, fear it, or are just tired of hearing about it, there’s no denying the Age of AI is officially here. Though our feelings about AI technology may not stop the space race-esque competition between AI providers, exploring public perceptions of AI will help business owners learn the best way to leverage it without losing consumer trust.

What do your customers really think about Artificial Intelligence–and how would they react if they knew how much you relied on it? How do opinions about AI change across generations, industries, and use cases? What scares and excites consumers the most about AI?

I analyzed dozens of recent studies, surveys, whitepapers, and polls to find out–let me share what I discovered with you.

Trust In AI Varies By Generation

Key Takeaway: Because younger generations (Gen Z and Millennials) have more experience with and knowledge of AI applications, they are more likely to trust it than older adults (Boomers/Silent Generation) who are less familiar with AI.

Perceptions of AI vary by generation and are influenced by each age group’s familiarity and hands-on experience with AI. There is a direct correlation between older generations’ lack of knowledge about AI technology and their distrust of it.

According to the 2024 KPMG Generative AI Consumer Trust Survey, while 74% of Gen Z and Millennials say they’re “extremely” or “very” knowledgeable about AI, only 13% of Boomers and Silent Generationists say the same. 43% of older adults say they’re “not too” or “not at all” knowledgeable about AI, compared to just 5% of younger generations. Further, 69% of Gen Zers and Millennials say GenAI significantly impacts their personal lives, compared to only 9% of Boomers and Silent Generationists.[*]

Perhaps this is why older adults are less likely to trust AI than younger ones–and why Boomers and Silent Generationists are more worried about the overall risks of AI and the lack of federal regulations surrounding it than Gen Zers and Millennials.

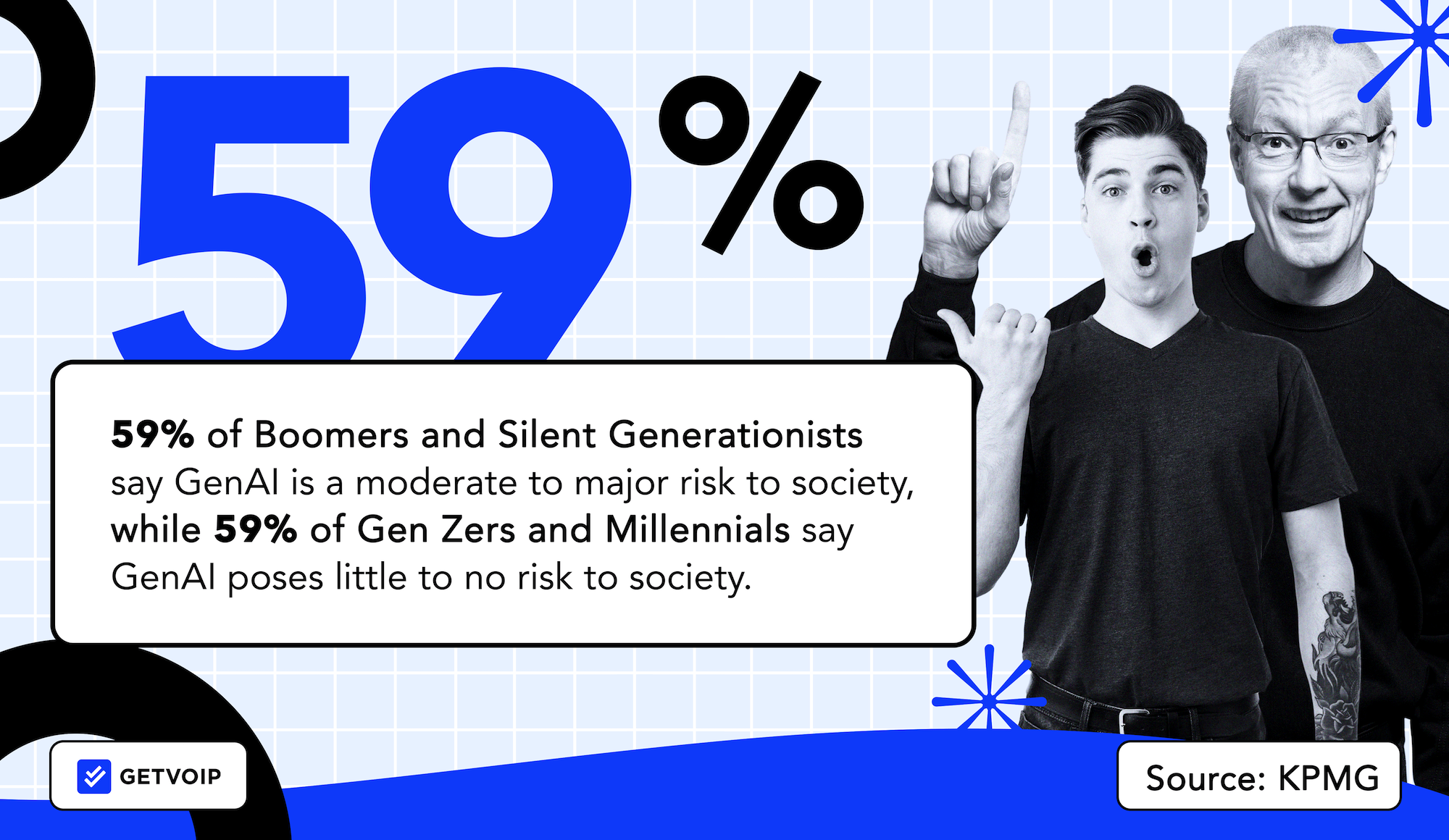

Of the Gen Zers and Millennials polled in the KPMG study:

- 80% say GenAI offers more benefits than risks

- 59% say GenAI poses little to no risk to society

- 88% trust organizations using GenAI in daily operations

- 60% say there is either enough or too much federal regulation around AI

Of the Boomers and Silent Generationists polled in the KPMG study:

- 35% say GenAI offers more risks than benefits

- 59% say GenAI is a moderate-to-major risk to society

- 55% trust organizations using GenAI in daily operations

- 65% say there is not enough federal regulation around AI

While intergenerational opinions about AI vary by use case, younger generations are still more comfortable with the use of AI than older ones.

If anything, skepticism has grown since then. A recent survey found that only 5% of Americans say they trust AI “a lot,” and confidence is lowest among adults over 55, 44% of whom say they distrust it.[*]

A 2025 Pew Research study showed that 50% of Americans are more concerned than excited about AI use in their daily lives, a sharp rise from 38% just four years earlier.[*] Yes, younger generations are more optimistic, but even they’re growing wary of AI in higher-stakes decisions.

Consumers Want Better AI Regulations

Key Takeaway: With distrust in AI trending upward, American consumers want standardized federal regulations for AI–especially relating to transparency of use, data privacy, and accountability for bad actors.

As AI continues to evolve, consumer distrust in it grows. The percentage of American adults who believe there should be more investment in AI assurance measures grew by 11 points in one year (from 2022 to 2023). Now, 72% want the federal government to invest more time and funding into researching and developing AI security standards.

This desire for federal AI regulation is a direct result of steadily declining consumer trust in AI. From 2022 to 2023, there was a 9-point drop in the percentage of adults who agree with the statement “I believe today’s AI technologies are safe and secure,” and a 7-point drop in the percentage of those who believe AI primarily exists to “assist, enhance, and empower consumers.”

A recent Ipsos report found that as many as 3 out of 4 Americans believe the government needs to take action to prevent AI-induced job losses.[*] More than half believe AI will worsen income inequality and make society more polarized. Republicans and Democrats, who agree on little else, are aligned in wanting AI oversight.[*]

Also worth mentioning: in August 2024, the European Union’s AI Act entered into force, becoming the world’s first comprehensive legal framework for AI.[*] In the two years since, no equivalent has emerged in the U.S., fueling further anxiety about whether governmental bodies intend to regulate AI use meaningfully.

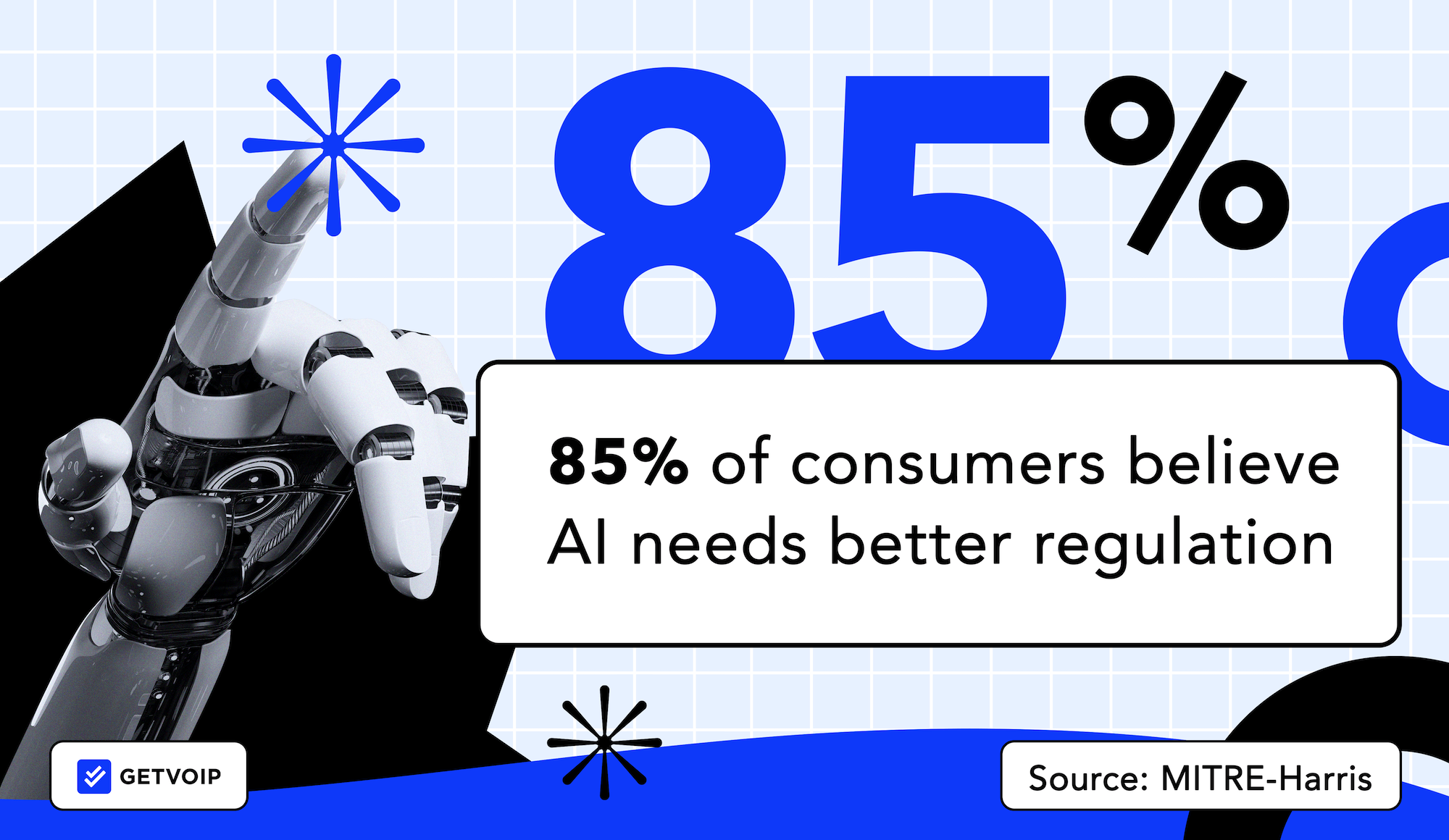

Now, over 80% of consumers agree that:

- AI technologies need to be regulated to protect consumers (85%)

- Businesses need to share AI assurance practices transparently before selling AI-powered products (85%)

- Businesses that invest in AI products need to contribute to AI assurance (85%)

- Making AI safer for the public must be a federal effort across government, academia, and industry (85%)

- Those developing AI tools should coordinate with one another and share data to make them safer (84%)

While these numbers are enough to make any business owner think twice before leveraging AI, the good news is that consumers aren’t blaming the lack of AI regulation on the businesses that use AI. Instead, they’re more concerned with AI technology developers.

65% of consumers say they still trust a business that leverages AI, while an additional 21% say the use of AI tools doesn’t impact their trust in a business at all.[*]

Top Consumer Concerns about AI

Key Takeaway: While online discourse and headlines may make us think job displacement is the number one concern about AI, in reality, consumers are more worried about AI’s role in cyberattacks, scams, and misinformation.

The data above clearly illustrates the American public’s growing concern about AI. But what exactly are people so worried about?

Unsurprisingly, one of the major concerns amongst those surveyed is that AI is going to take everyone’s jobs. A recent Gallup poll showed that 71% of Americans say they are concerned that too many people will lose their jobs to AI advancements.[*]

But job loss isn’t consumers’ only worry, or even their biggest. They’re also concerned about AI’s role in: [*] [*]

- Malicious cyberattacks (80%)

- Political chaos caused by U.S. rivals (77%)

- Identity theft (78%)

- Collecting and selling personal data (76%)

- Autonomous vehicles (73%)

- Core infrastructure decisions (70%)

- Deceptive political ads (74% across the political spectrum)

- Replacement for in-person relationships (66%)

- Fake news/content (67%)

- Increased electricity consumption (61%)

- Scams/phishing schemes (65%)

Just like generalized AI anxiety, more specific concerns about AI are also trending upward.

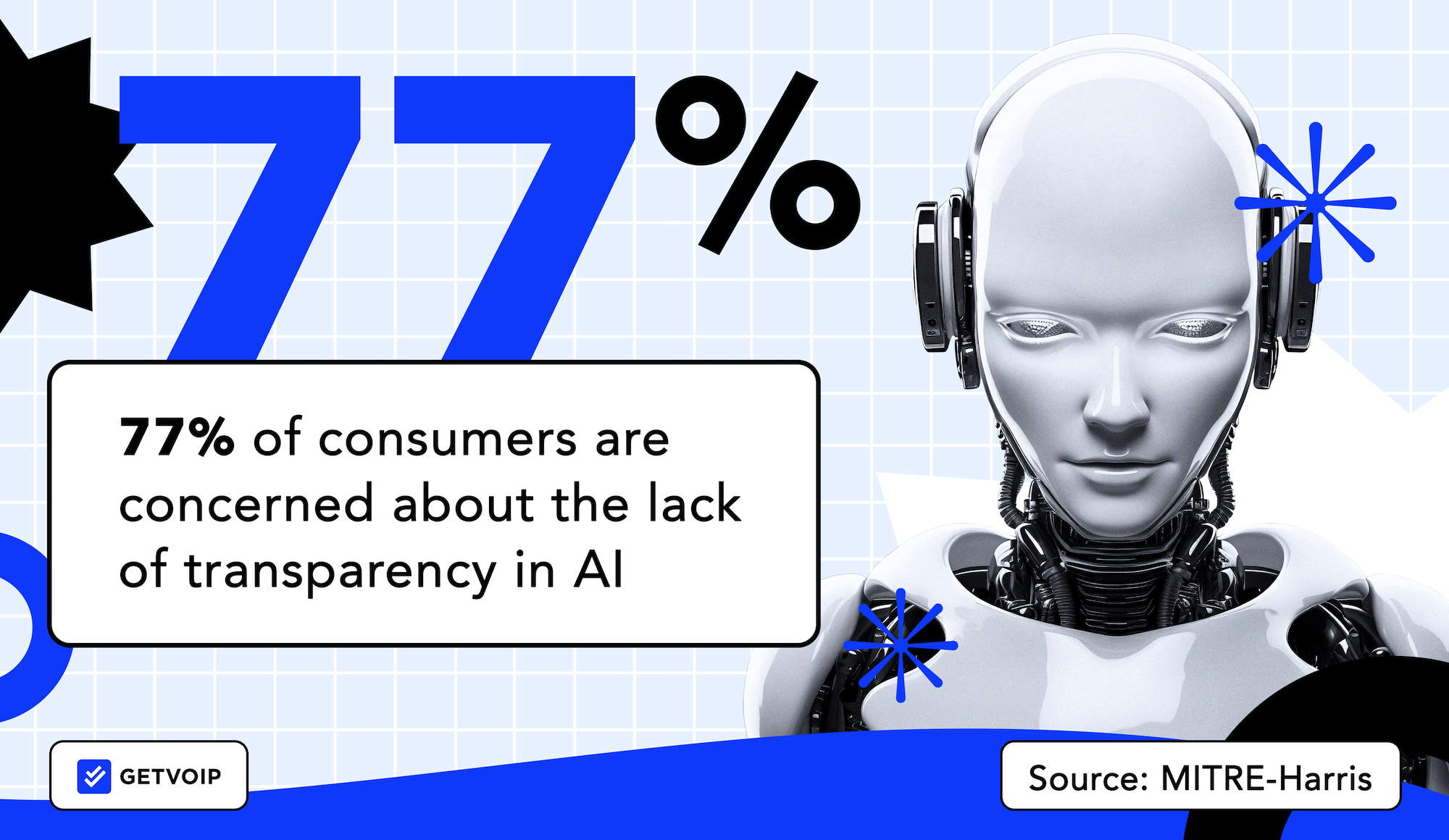

In 2023, 77% of consumers reported being concerned about the lack of transparency in AI (up from 69% in 2022), and 70% reported being concerned about AI creating and expanding social biases (up from 62% in 2022).[*]

Deepfakes have become a pain point that can’t be ignored. According to a recent McAfee report, Americans spend an average of 114 hours a year determining whether messages are real or scams, partly because of AI.[*] The same study found that Americans see an average of three deepfakes per day. More than 1 in 3 Americans are unsure whether they can identify deepfake scams, and 10% say they have experienced a voice-clone scam.

How Consumers Feel About AI Generated Content

Key Takeaway: Consumers have become clear about where they find AI-generated content acceptable and where they don’t. They may come to forgive it in customer service, but they aren’t keen on it in journalism, healthcare, advertising, or anything personal. Trust is at a crossroads.

In 2025, Merriam-Webster’s word of the year was “slop,”[*] mostly in reference to AI slop: low-effort, AI-generated content that has infected social feeds, inboxes, and messaging threads. This perception has fed distrust of AI as it becomes part of advertising efforts. New research from IAB reveals a perception gap: 82% of advertising executives believe Gen Z and Millennial consumers feel positive about AI-generated ads and content, but only 45% of those consumers say they do.[*]

There’s a key difference between AI recommending a playlist and AI writing news articles. Shopping recommendations are not the same as AI answering a patient’s questions about a diagnosis or sending a “personalized” birthday message on behalf of a brand. Consumers are drawing lines between where AI responses are fine and where they come off as “creepy” or “impersonal.”

According to a Salesforce survey of over 15,000 consumers, 60% say AI advances make trust more important than ever, and 44% say they have their suspicions.[*] SurveyMonkey’s most recent study shows that most consumers are happy to use AI to order food and drink (65%) or return an item (59%). That comfort plummets when it comes to medical or investment advice: over two-thirds say they are uncomfortable using AI for those tasks (69% for medical advice, 68% for investment advice).[*]

Still, the trust burden ultimately falls on the businesses that deploy AI rather than the developers behind it. The 2025 Edelman Trust Barometer shows that only 44% of people globally trust businesses using AI. In the US, that number drops to just 32%.[*] Customers aren’t rejecting AI experiences wholesale, but they are signaling that they want trustworthy ones, and right now, trust is low.

Perceptions of AI By Use Case

Key Takeaway: Perceptions of and trust in AI vary according to industry and specific use case. Consumer trust in AI is highest when used as an educational resource, to make personalized recommendations, or to provide customer service–but is lowest when used to power self-driving cars and make investment decisions.

Provider and Patient Perspectives on AI In Healthcare

Overall, patients are much more enthusiastic about the use of AI in healthcare than medical professionals–but both patients and providers say their sense of trust in AI varies according to its specific use case within a healthcare setting. Patients and providers agree that AI can’t replace a human medical professional’s expertise, experience, or bedside manner–and both question the reliability and accuracy of AI-generated diagnoses and treatment plans.

A 2023 Tebra survey on Perceptions of AI In Healthcare found that 8 in 10 patients believe AI can improve the quality and accessibility of healthcare while lowering its costs.[*] 57% of Americans say GenAI’s greatest impact will be improving people’s mental and physical health, and a quarter of patients say they wouldn’t visit a healthcare provider that didn’t leverage AI technology.[*] Patients cite faster access to in-person medical care, telemedicine, and a reduced risk of human error in medical decisions as the biggest reasons to visit a provider using AI.

In stark contrast, only 26% of medical professionals say they’re “excited” about integrating AI into the medical field–and 42% are “not excited” at all. While medical professionals plan to use AI primarily for data entry (52%), appointment scheduling (42%), and patient communication (36%), their biggest concerns are the loss of human interaction (55%), patient data privacy (50%), and provider over-reliance on AI (49%). Providers are less likely to use AI for remote and in-person patient monitoring, diagnosis, treatment, or as virtual medical assistants. Instead, they say AI will help improve efficiency and deliver cost savings via task automation.

Still, 53% of Americans agree that AI can’t replicate the experience of visiting a real live medical professional–and 47% are concerned that AI may generate inaccurate diagnoses and treatment plans.

Patient enthusiasm is nuanced. A 2024 Wolters Kluwer Health survey found that 58% of patients are fine with AI assisting a doctor, but that share drops to 32% when AI makes decisions autonomously.[*] Patients seem to want AI as a co-pilot, not as the primary provider. This framing may be key for healthcare providers looking to expand their AI integrations.

In 2026, nurses report using GenAI both in their personal lives (58%) and on the job (46%). 45% say it could help reduce nursing staff burnout by automating rote tasks like documentation or triaging routine patient questions. That said, 53% worry AI could undermine their decision-making skills or lead to overreliance on algorithmic output.[*]

Therefore–for now, at least–AI’s biggest impact on healthcare isn’t performing open-heart surgery or using GenAI voicebots to talk people off building roofs. Instead, it’s streamlining the patient onboarding/scheduling process and automating exhausting paperwork.

Public Perceptions of AI in Finance

Overall, American consumers are hesitant about AI’s role in banking and finance and don’t trust AI technology to manage their money or correctly identify them at the ATM (via facial recognition). According to a 2024 J.D. Power survey of 4,000 retail banking customers nationwide, 64% say the use of AI in financial services puts them at some level of risk, while 20% think the tools expose them to extreme risk.[*]

Though banks currently offer tools that leverage AI to find and compare savings rates, loans, and investments, only 11% of customers surveyed say they currently use these tools–and between 14% and 26% of respondents say they never will.

While consumer trust in AI-powered banking applications is currently low, the majority of those polled say they’re willing to try out the technology once it’s been proven to work. Public perception is the most positive about AI banking apps that help customers avoid fraud, with 21% of respondents saying they’re already using banking fraud prevention tools and 36% saying they would try them out immediately.

If banks and other financial institutions can prove to their customers that AI-powered banking apps are secure, 33% of consumers would be willing to try out tools that automate investment portfolio management, make investment recommendations, or offer suggestions on how to save money.

Fraud prevention is one area where consumers are signaling they may want more AI in banking. A recent study by Jumio showed that 69% of customers say AI-powered fraud protection would raise their trust in their chosen bank.[*] That makes fraud prevention the highest-trust AI use case in the sector, and possibly the best opening for financial services businesses looking to introduce AI to customers.

Consumer and Business Owner Perspectives on AI In Retail

Of all the industries explored in this post, retail has the most positive perception of AI, among business owners and consumers alike.

Square’s 2024 Future of Commerce report found that 100% of business owners say technology and automation have improved their business, especially via automated order tracking (43%) and automated payroll/benefits (42%). 45% of retailers say adopting automation technology has increased employee retention and profits, and 47% say it has improved the customer experience.[*]

77% of retail shoppers are comfortable with, or neutral about, AI being used to improve their shopping experience, and 67% prefer automated tools over interacting with live staff for basic tasks like ordering out-of-stock products or checking inventory. Shoppers are most excited about retailers using AI to automate checkout (31%).

Consumers trust AI-powered retail tools most when they automatically tailor promotions and deals based on buying history (61%), generate product purchase reminders (58%), or offer virtual try-on and “view in my space” VR features (56%).

Maintaining Consumer Trust in the Age of AI: Best Practices

While federal regulation of AI may be on the horizon, it’s still your responsibility as a business owner to use AI ethically, effectively, and transparently–or risk losing consumer trust in your brand.

Best practices for leveraging AI while maintaining customer trust are:

- Develop an AI Code of Ethics: Create an AI Code of Ethics for your business that outlines why, how, and when you’ll use AI–and why, how, and when you won’t. Identify the potential risks of using AI technology, routinely check for algorithmic bias and discrimination, and make it easy for customers to opt out of automated data collection. Overwhelmed? The OSTP’s Blueprint for an AI Bill of Rights is a great place to start.

- Prioritize human oversight: Though AI can do lots of incredible things, the truth is that most AI technology isn’t anywhere near ready to operate 100% autonomously (don’t believe me? Just try out a few AI tools yourself–the gaps will quickly become obvious). Human oversight isn’t just an essential part of any effective AI strategy; it also ensures your business and customers get the most out of AI-powered tools like Agent Assist. Have a human review and edit AI-generated content, check it for accuracy and completeness, and continually monitor AI applications. Most of all? Make it easy for customers to talk to a real person when they need to.

- Be transparent about how you use AI: Your customers may not always be able to tell when they’re talking to an AI-powered voice or chatbot, but that’s all the more reason to tell them. The same goes for AI-generated blog content, product recommendations, customer service/support, etc.

- Protect customer data and privacy: Be transparent about what data you collect, how long you store it, and what you use it for. Ensure customers can easily opt out, and always redact or anonymize sensitive customer data.